Generate-Text (Index of Posts):

This category of tools generates natural language text for specific purposes, such as fooling a neural language classifier.

This includes:

12 May 2020

Title: TextAttack: A Framework for Adversarial Attacks in Natural Language Processing

Abstract

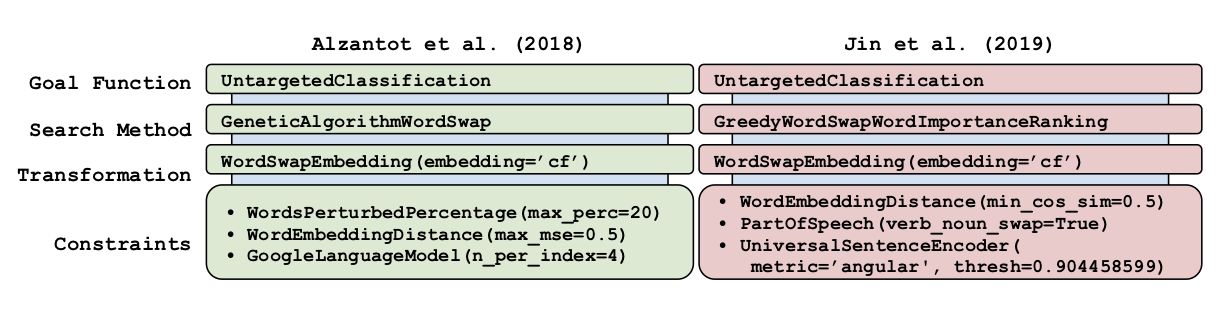

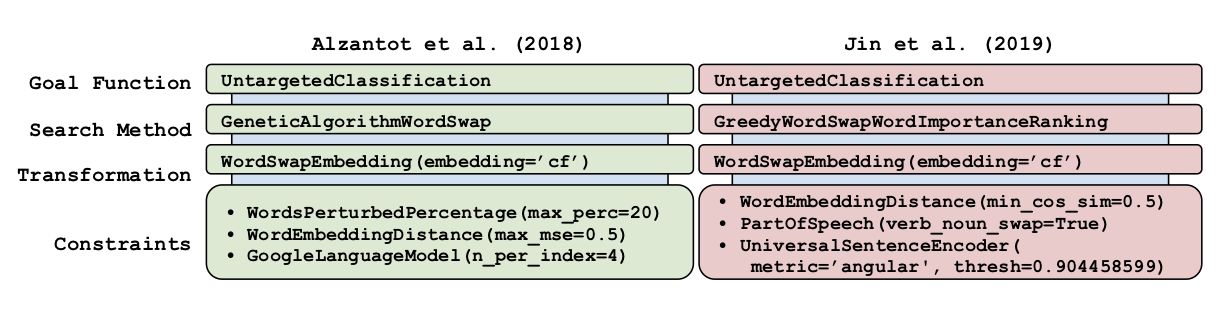

TextAttack is a library for generating natural language adversarial examples to fool natural language processing (NLP) models. TextAttack builds attacks from four components: a search method, goal function, transformation, and a set of constraints. Researchers can use these components to easily assemble new attacks. Individual components can be isolated and compared for easier ablation studies. TextAttack currently supports attacks on models trained for text classification and entailment across a variety of datasets. Additionally, TextAttack’s modular design makes it easily extensible to new NLP tasks, models, and attack strategies. TextAttack code and tutorials are available at this https URL.

TextAttack is a Python framework for adversarial attacks, data augmentation, and model training in NLP.
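To make the four-component decomposition concrete, here is a minimal sketch in plain Python. It does not use the TextAttack library or its API: the toy keyword model, the substitute table, and every function name below are hypothetical and exist only to show how a transformation, a constraint, a goal function, and a search method could fit together.

```python
from typing import List, Optional

# Hypothetical victim model: predicts 1 (positive) when positive keywords
# outnumber negative ones, otherwise 0. A stand-in for a real classifier.
POSITIVE = {"good", "great", "fine"}
NEGATIVE = {"bad", "awful", "poor"}

def toy_model(text: str) -> int:
    words = text.lower().split()
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return 1 if score > 0 else 0

# Transformation: propose candidates by swapping one word for a listed substitute.
# The substitute table is made up for this illustration.
SUBSTITUTES = {"good": ["decent", "bad"], "great": ["okay"]}

def word_swap(text: str) -> List[str]:
    words = text.split()
    candidates = []
    for i, word in enumerate(words):
        for sub in SUBSTITUTES.get(word.lower(), []):
            candidates.append(" ".join(words[:i] + [sub] + words[i + 1:]))
    return candidates

# Constraint: reject candidates that change more than `limit` words.
def within_word_budget(original: str, candidate: str, limit: int = 1) -> bool:
    changed = sum(a != b for a, b in zip(original.split(), candidate.split()))
    return changed <= limit

# Goal function: untargeted misclassification.
def goal_reached(candidate: str, true_label: int) -> bool:
    return toy_model(candidate) != true_label

# Search method: breadth-first exploration of transformed candidates.
def run_attack(text: str, true_label: int, max_steps: int = 3) -> Optional[str]:
    frontier = [text]
    for _ in range(max_steps):
        next_frontier = []
        for current in frontier:
            for candidate in word_swap(current):
                if not within_word_budget(text, candidate):
                    continue
                if goal_reached(candidate, true_label):
                    return candidate
                next_frontier.append(candidate)
        frontier = next_frontier
    return None  # no adversarial example found within the budget

adversarial = run_attack("the movie was good", true_label=1)
```

Because each piece is a separate function, replacing the search method or tightening the constraint leaves the other three untouched, which is the property that makes component-wise comparison straightforward.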

Citations

@misc{morris2020textattack,

title={TextAttack: A Framework for Adversarial Attacks in Natural Language Processing},

author={John X. Morris and Eli Lifland and Jin Yong Yoo and Yanjun Qi},

year={2020},

eprint={2005.05909},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

Having trouble with our tools? Please contact Ji Gao and we’ll help you sort it out.

01 Oct 2018

Title: Black-box Generation of Adversarial Text Sequences to Evade Deep Learning Classifiers

A shorter version was published at the 2018 IEEE Security and Privacy Workshops (SPW), co-located with the 39th IEEE Symposium on Security and Privacy.

TalkSlide: URL

Abstract

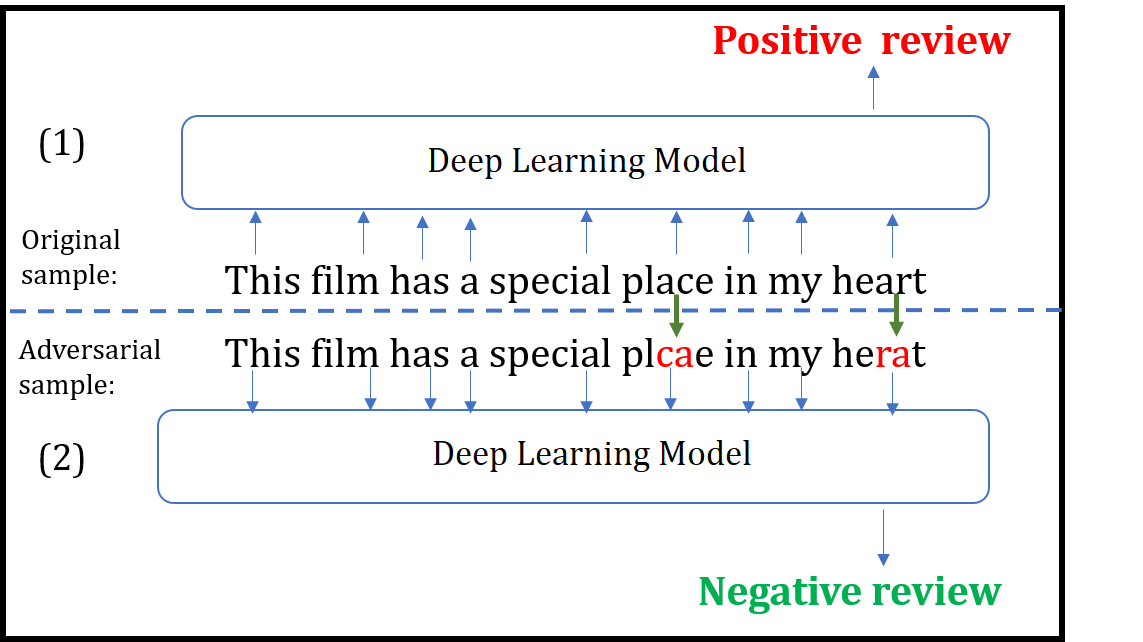

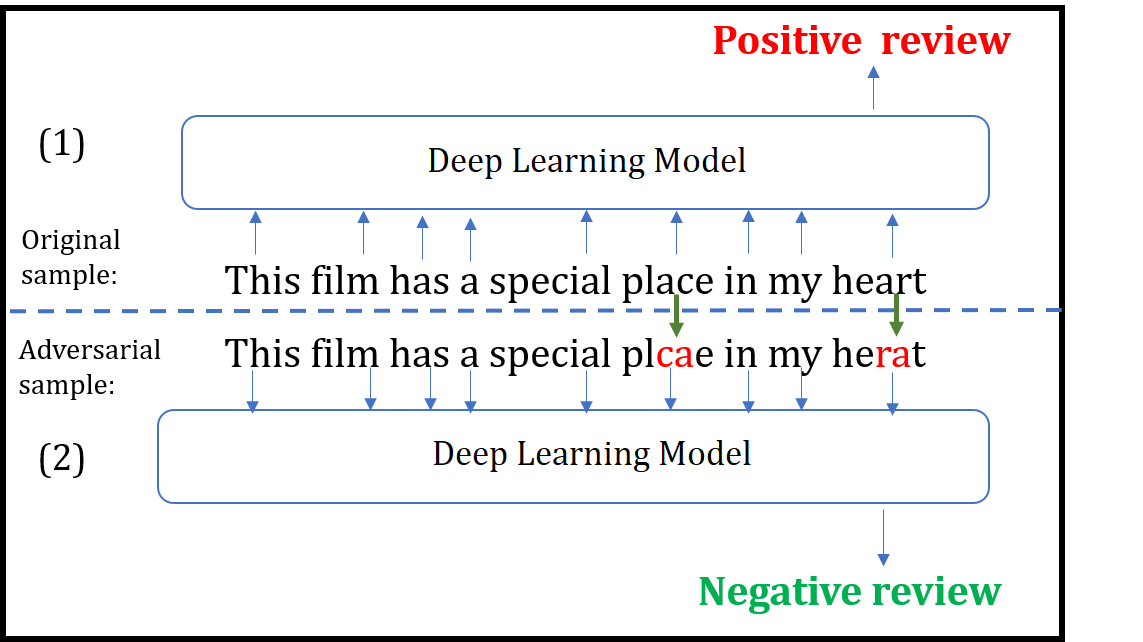

Although various techniques have been proposed to generate adversarial samples for white-box attacks on text, little attention has been paid to a black-box attack, which is a more realistic scenario. In this paper, we present a novel algorithm, DeepWordBug, to effectively generate small text perturbations in a black-box setting that forces a deep-learning classifier to misclassify a text input. We develop novel scoring strategies to find the most important words to modify such that the deep classifier makes a wrong prediction. Simple character-level transformations are applied to the highest-ranked words in order to minimize the edit distance of the perturbation. We evaluated DeepWordBug on two real-world text datasets: Enron spam emails and IMDB movie reviews. Our experimental results indicate that DeepWordBug can reduce the classification accuracy from 99% to around 40% on Enron data and from 87% to about 26% on IMDB. Also, our experimental results strongly demonstrate that the generated adversarial sequences from a deep-learning model can similarly evade other deep models.

Citations

@INPROCEEDINGS{JiDeepWordBug18,

author={J. Gao and J. Lanchantin and M. L. Soffa and Y. Qi},

booktitle={2018 IEEE Security and Privacy Workshops (SPW)},

title={Black-Box Generation of Adversarial Text Sequences to Evade Deep Learning Classifiers},

year={2018},

pages={50-56},

keywords={learning (artificial intelligence);pattern classification;program debugging;text analysis;deep learning classifiers;character-level transformations;IMDB movie reviews;Enron spam emails;real-world text datasets;scoring strategies;text input;text perturbations;DeepWordBug;black-box attack;adversarial text sequences;black-box generation;Perturbation methods;Machine learning;Task analysis;Recurrent neural networks;Prediction algorithms;Sentiment analysis;adversarial samples;black box attack;text classification;misclassification;word embedding;deep learning},

doi={10.1109/SPW.2018.00016},

month={May},}

Having trouble with our tools? Please contact Yanjun Qi and we’ll help you sort it out.